The digital landscape for law firms is undergoing a seismic shift.

As artificial intelligence becomes increasingly entwined with search engines, the need for an AI-ready technical SEO audit has never been more urgent. AI-driven search technologies are reshaping how information is discovered, accessed, and ranked, moving beyond simple keyword queries to comprehend the context and nuances within digital content. Thus, for any law firm aspiring to maintain a robust online presence and visibility, the convergence of traditional SEO and AI-readiness is not just recommended but essential.

In 2026, the evolution of search engine algorithms is at a pivotal moment, with AI taking the lead. Law firms that fail to capitalize on this new wave of SEO risk being overshadowed by competitors that embrace and integrate AI-focused strategies. It is crucial to adapt by understanding the intricacies of both traditional and AI-driven search environments. Such knowledge will enable law firms to leverage technical SEO audits that align with the needs of machine-led content discovery and understanding.

An AI-ready technical SEO audit involves a comprehensive examination of several critical elements. These include ensuring proper crawler access, effective JavaScript rendering, and the strategic use of structured data like JSON-LD, which has been adopted by 53% of top websites (W3Techs Report 2026).

The goal is to ensure that AI crawlers can efficiently parse and cite your online content. Furthermore, creating machine-readable content is vital for AI systems to reference effectively. This article will guide you through a detailed audit checklist, empowering law firms to enhance their visibility across both conventional and AI-driven search domains.

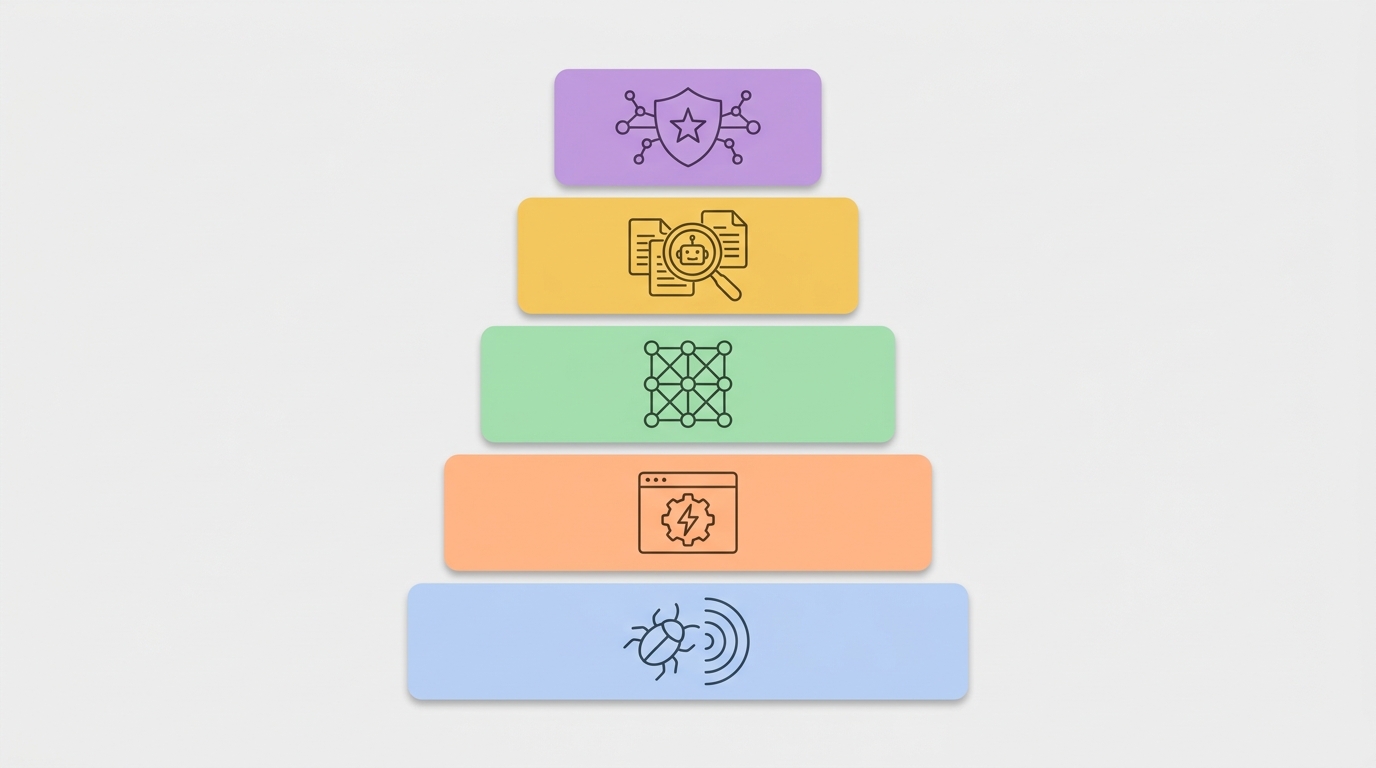

AI-ready technical SEO audit: the five core layers

This checklist breaks the audit into five technical layers. It aligns traditional SEO with AI-driven search. Each layer contains specific tests and remediation steps. Follow the order because the first layers enable later ones.

Layer one: crawler access and indexability

Ensure AI crawlers can reach and read your content. Start with robots.txt and server responses. Then verify sitemap and fetch status. Use these checks:

- Validate robots.txt rules for major crawlers and user agents. Include Googlebot, GPTBot, ClaudeBot, and others. Be explicit about path allowances and disallows. Because AI crawlers vary, aim for permissive defaults on public content.

- Confirm server response codes through curl or server logs. Fix 4xx and 5xx errors quickly. Ensure canonical URLs return 200 status.

- Publish and maintain XML sitemaps. List only canonical HTTPS URLs. Submit sitemaps to search engine webmaster tools, and to AI indexing endpoints where a provider offers one.

- Monitor crawl budget and request throttling. Use server logs to measure AI crawler patterns. Adjust rate limits and IP blocks accordingly.
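The robots.txt check above can be sketched with Python's standard library. This is a minimal sketch, not a full audit tool: the sample rules and the paths (`/practice-areas/`, `/client-portal/`) are hypothetical, and in practice you would load your own robots.txt file instead of the inline string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a law firm site; the paths are illustrative only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /practice-areas/
Disallow: /client-portal/

User-agent: *
Disallow: /client-portal/
"""


def check_access(robots_txt: str, agents: list[str], paths: list[str]) -> dict[tuple[str, str], bool]:
    """Return whether each user agent may fetch each path under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, path): parser.can_fetch(agent, path)
        for agent in agents
        for path in paths
    }


if __name__ == "__main__":
    report = check_access(
        SAMPLE_ROBOTS_TXT,
        agents=["Googlebot", "GPTBot", "ClaudeBot"],
        paths=["/practice-areas/family-law", "/client-portal/login"],
    )
    for (agent, path), allowed in report.items():
        print(f"{agent:10} {path:30} {'allowed' if allowed else 'blocked'}")
```

Note that Python's parser applies the first matching rule in file order, which may differ from how an individual crawler resolves conflicting Allow and Disallow lines, so treat the result as a sanity check rather than a guarantee.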

Layer two: JavaScript rendering and delivery

AI crawlers differ in rendering capabilities. Some read static HTML only. Therefore ensure critical content renders for agents that do not execute JavaScript.

- Prefer server-side rendering (SSR) or static site generation (SSG) for single page applications. This ensures visibility for GPTBot and PerplexityBot.

- If client rendering remains, implement dynamic prerendering or hybrid rendering. Test with curl and View Source. Confirm important text appears in initial HTML.

- Use Playwright or an automated rendering test to replicate major AI crawlers. Capture screenshots and page HTML for verification.

- Minimize render blocking scripts and inline critical CSS. Improve Time to First Byte and First Contentful Paint.
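The "important text appears in initial HTML" test can be automated once you have saved the raw response (for example, the output of curl). The sketch below assumes the HTML is already available as a string; the two sample pages and the phrases are hypothetical. It ignores text inside `script`, `style`, and `template` elements, since an agent that does not execute JavaScript never sees that text rendered.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text that is present in the HTML without running any scripts."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    extractor = VisibleTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    # Collapse runs of whitespace so phrase matching is not layout-sensitive.
    return " ".join(" ".join(extractor.chunks).split())


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do NOT appear in the initial HTML text."""
    text = visible_text(raw_html).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical pages: a client-rendered shell and a server-rendered equivalent.
CSR_SHELL = (
    '<html><body><div id="root"></div>'
    '<script>render("Personal injury attorneys")</script></body></html>'
)
SSR_PAGE = "<html><body><h1>Personal injury attorneys</h1><p>Free consultation.</p></body></html>"

if __name__ == "__main__":
    print(missing_phrases(CSR_SHELL, ["Personal injury attorneys"]))
    print(missing_phrases(SSR_PAGE, ["Personal injury attorneys", "Free consultation"]))
```

A non-empty result for a page flags content that only exists after JavaScript runs, which makes that page a candidate for SSR, SSG, or prerendering.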

Layer three: structured data and schema markup

Structured data makes meaning explicit for AI systems. JSON-LD is the preferred format and widely adopted. Therefore add rich markup to legal pages.

- Implement JSON-LD for attorneys, law firm, practice areas, and legal services. Use schema.org types such as Organization and Person.

- Validate markup with structured data testing tools. Fix errors and warnings promptly. Reference W3Techs adoption metrics for context.

- Include GEO data and statistics where relevant. Data density increases AI visibility and citations, per Yext analysis.

- Keep markup consistent with visible page content. Do not misrepresent facts, because AI agents cross check machine readable and visual signals.
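As an illustration of the markup described above, the sketch below builds a JSON-LD object for a firm and its attorneys and wraps it in a script tag. The firm name, URL, location, and attorney are placeholders, and the choice of `LegalService` (a schema.org subtype of Organization) plus `Person` is one reasonable modeling option, not the only valid one. Validate the output with a structured data testing tool before publishing.

```python
import json


def build_law_firm_jsonld(name, url, city, region, practice_areas, attorneys):
    """Build a schema.org LegalService object with its attorneys as Person entries."""
    return {
        "@context": "https://schema.org",
        "@type": "LegalService",
        "name": name,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "addressLocality": city,
            "addressRegion": region,
        },
        "knowsAbout": list(practice_areas),
        "employee": [
            {"@type": "Person", "name": attorney, "jobTitle": "Attorney"}
            for attorney in attorneys
        ],
    }


def to_script_tag(markup: dict) -> str:
    """Serialize the markup into a JSON-LD script element for the page head."""
    payload = json.dumps(markup, indent=2)
    # Escape "</" so a string value can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # All values below are placeholders for illustration.
    markup = build_law_firm_jsonld(
        name="Example Law Group",
        url="https://www.example.com",
        city="Springfield",
        region="IL",
        practice_areas=["Family law", "Personal injury"],
        attorneys=["Jane Doe"],
    )
    print(to_script_tag(markup))
```

Generating the markup from the same data source that renders the visible page is one way to keep the two consistent.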

Layer four: how AI agents read and surface content

Design content for machine readability and hierarchy. AI systems rely on clear headings and accessibility trees. Therefore audit the accessibility layer.

- Verify semantic HTML structure and heading order. Use H1 then H2 sequence and avoid flattened layouts.

- Audit the accessibility tree and ARIA usage. WebAIM publishes the average number of accessibility errors it detects per page; see WebAIM for current metrics.

- Ensure visible text, metadata, and JSON-LD convey the same claims. AI citations favor corroborated and structured content.

- Provide canonical excerpts and summary blocks for long legal articles. These assist snippet generation and AI citations.
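The heading order check can be scripted against saved HTML. The sketch below is a simplified audit under two assumptions that you may want to relax: that each page should open with a single H1, and that a jump of more than one level (for example H2 to H4) counts as a flattened or broken hierarchy.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(raw_html: str) -> list[str]:
    """Return human-readable problems with the page's heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(raw_html)
    collector.close()
    levels = collector.levels
    if not levels:
        return ["no headings found"]
    issues = []
    if levels[0] != 1:
        issues.append(f"first heading is h{levels[0]}, expected h1")
    if levels.count(1) > 1:
        issues.append(f"{levels.count(1)} h1 elements found, expected one")
    # Moving back up the hierarchy is fine; only skipped levels going down are flagged.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current}")
    return issues


if __name__ == "__main__":
    sample = "<h1>Firm</h1><h2>Practice areas</h2><h4>Fees</h4>"
    print(heading_issues(sample))
```

This covers only heading tags; ARIA roles and the wider accessibility tree still need a dedicated accessibility tool.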

Layer five: AI content discovery and evaluation signals

Optimize signals that help AI systems discover and trust your content. These include backlink quality, data richness, and behavioral metrics.

- Increase data density on local and practice area pages. Add case counts, outcomes, and regional statistics with sources.

- Maintain a clean machine readable identity via llms.txt and site metadata. This helps search crawlers and user triggered agents.

- Monitor citation frequency and external references. Rich sites earn more AI citations according to Yext research.

- Track crawler types and proportions in server logs. Use patterns to prioritize pages for SSR or increased markup.
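Tracking crawler proportions can start with a simple pass over access logs. The sketch below assumes logs in the common "combined" format and matches crawlers by a name token in the user agent string; the sample log lines and user agent strings are simplified placeholders, not real crawler signatures, and user agents can be spoofed, so verify important findings by other means.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Name tokens to look for; extend this tuple with the crawlers you care about.
CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot", "Googlebot")


def crawler_hits(log_lines) -> Counter:
    """Count requests per crawler token; everything else is counted as 'other'."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        name = next((t for t in CRAWLER_TOKENS if t.lower() in agent), "other")
        hits[name] += 1
    return hits


def shares(hits: Counter) -> dict[str, float]:
    """Convert hit counts into percentages of all parsed requests."""
    total = sum(hits.values())
    if total == 0:
        return {}
    return {name: round(100 * count / total, 1) for name, count in hits.items()}


# Simplified, hypothetical log lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /practice-areas/family-law HTTP/1.1" 200 5120 "-" "ExampleAgent (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:02 +0000] "GET /attorneys/jane-doe HTTP/1.1" 200 4096 "-" "ExampleAgent (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET / HTTP/1.1" 200 2048 "-" "ExampleAgent (compatible; Googlebot/2.1)"',
    '192.0.2.9 - - [10/Jan/2026:10:02:00 +0000] "GET /contact HTTP/1.1" 200 1024 "-" "ExampleBrowser/1.0"',
]

if __name__ == "__main__":
    print(shares(crawler_hits(SAMPLE_LOG)))
```

Grouping the same counts by requested path instead of by crawler is a small change and gives the page-level view needed to prioritize SSR or additional markup.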

Each layer contains technical tests and concrete fixes. Use this checklist to run a systematic AI-ready technical SEO audit. The approach ensures law firms remain visible across traditional and AI-driven search.

Impact of emerging AI crawlers and traffic patterns on law firm SEO

AI crawler activity now forms a material portion of web traffic. Cloudflare reports that bots generated 30.6 percent of all web traffic in Q1 2026. Therefore law firms must treat crawler behavior as a core SEO signal. Below are the critical trends and what they mean for legal websites.

Key traffic breakdown

- Bots accounted for 30.6 percent of web traffic in Q1 2026 (Cloudflare). Because this share is large, non human consumers directly affect indexing and content reuse.

- Distribution of AI crawler types: 89.4 percent training crawlers, 8 percent search crawlers, 2.2 percent user triggered agents. Thus most requests aim at model training and bulk data collection.

Why this matters for law firms

- Visibility shifts from human keyword matches to machine readable signals. AI systems prioritize structured, reliable data when citing legal information.

- Training crawlers ingest large volumes of content. Therefore poor structure or hidden content reduces the chance AI systems will learn from your pages.

- User triggered agents fetch content at the moment of query. Thus providing concise, machine friendly summaries improves adoption in conversational answers.

Notable crawlers and rendering behavior

| Crawler | Typical purpose | Rendering capability |
|---|---|---|
| GPTBot | Training and data collection | Static HTML only |
| ClaudeBot | Training and retrieval | Static HTML only |
| PerplexityBot | Search and answer generation | Static HTML only |
| CCBot | General crawling | Static HTML only |
| Major search crawlers (e.g. Googlebot) | Indexing and ranking | Renders JavaScript |

Because four of the five crawlers listed above fetch static HTML only, law firms must ensure critical legal content appears in initial HTML. This avoids missed content for many AI agents.

Operational impacts and recommendations

- Audit server logs for crawler patterns. Identify spikes from training agents and prioritize pages they request.

- Ensure robots.txt allows access to pages you want AI agents to learn from. However, block low value or duplicate content.

- Prefer SSR or SSG for legal resources and attorney bios. If you must use client side rendering, provide prerendered snapshots.

- Supply high quality structured data and consistent visible text. AI systems cross check both machine readable and visible signals when citing.

- Improve data density on practice pages. Yext finds data rich sites earn more AI citations (Yext). Therefore include GEO data and case statistics where appropriate.

- Audit accessibility and the accessibility tree. WebAIM reports rising accessibility errors, which correlate with weaker machine readability (WebAIM).
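The recommendation to keep structured data and visible text consistent can be spot-checked automatically. The sketch below extracts JSON-LD from a page and reports string fields whose values never appear in the visible text. It assumes each JSON-LD block is a single top-level object and checks only the `name` and `telephone` fields by default; the sample page and its phone numbers are made up for illustration.

```python
import json
from html.parser import HTMLParser


class PageSignals(HTMLParser):
    """Collect JSON-LD blocks and visible text from one HTML document."""

    def __init__(self):
        super().__init__()
        self._mode = "text"  # one of "text", "jsonld", "skip"
        self.jsonld_blocks = []
        self.text_chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            kind = (dict(attrs).get("type") or "").lower()
            self._mode = "jsonld" if kind == "application/ld+json" else "skip"
            if self._mode == "jsonld":
                self.jsonld_blocks.append("")
        elif tag == "style":
            self._mode = "skip"

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._mode = "text"

    def handle_data(self, data):
        if self._mode == "jsonld":
            self.jsonld_blocks[-1] += data
        elif self._mode == "text":
            self.text_chunks.append(data)


def unsupported_claims(raw_html: str, fields=("name", "telephone")):
    """Return (field, value) pairs present in JSON-LD but absent from visible text."""
    signals = PageSignals()
    signals.feed(raw_html)
    signals.close()
    text = " ".join(" ".join(signals.text_chunks).split()).lower()
    problems = []
    for block in signals.jsonld_blocks:
        markup = json.loads(block)
        if not isinstance(markup, dict):
            continue  # arrays and other shapes are out of scope for this sketch
        for field in fields:
            value = markup.get(field)
            if isinstance(value, str) and value.lower() not in text:
                problems.append((field, value))
    return problems


# Hypothetical page where the markup's phone number disagrees with the visible one.
SAMPLE_PAGE = """<html><body><h1>Example Law Group</h1><p>Call 555-0100.</p>
<script type="application/ld+json">
{"@type": "LegalService", "name": "Example Law Group", "telephone": "555-0199"}
</script></body></html>"""

if __name__ == "__main__":
    print(unsupported_claims(SAMPLE_PAGE))
```

Exact substring matching is deliberately strict; formatting differences such as phone number punctuation will be flagged and need a manual look.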

In short, AI crawler trends change how law firms win visibility. By monitoring crawler types and optimizing for machine readable structure, legal sites improve indexing and increase the likelihood of AI citations.

AI-ready technical SEO audit: Traditional SEO vs AI-ready elements

Below is a compact comparison table for law firms. It explains how audits evolve to support AI crawlers and machine readers. Use the table to prioritize technical fixes.

| Element | Traditional SEO audit | AI-ready technical SEO audit (law firms) |
|---|---|---|
| Crawler types | Focus on search crawlers like Googlebot and Bingbot | Includes training crawlers, search crawlers and user-triggered agents such as GPTBot and ClaudeBot |
| Rendering methods | Verify JavaScript indexing and client side rendering where applicable | Prefer server-side rendering or static site generation and provide prerendered snapshots for agents that read static HTML |
| Structured data | Add schema markup for core entities; JSON-LD used variably | Deploy extensive JSON-LD for attorneys, organizations, practice areas and GEO data to increase AI citations |
| Content evaluation signals | Rank, backlinks, and click through rates drive decisions | Emphasize data density, source citations, canonical excerpts and machine readable summaries for AI agents |
| Indexing focus | Canonicals, sitemaps and robots handling for human search | Indexability plus machine readable identity such as llms.txt and citation ready markup for AI systems |
| Accessibility and ARIA | Often audited for compliance and usability | Audit the accessibility tree and ARIA to improve machine readability and heading hierarchy |
| Crawl control and robots.txt | Block or limit bots to conserve crawl budget | Allow major AI crawlers access to high value pages and block low value agents selectively |
| Performance metrics | Core Web Vitals and page speed targets | Same metrics plus fast TTFB and render time for first meaningful HTML delivery |

Run this comparison during your next audit. Because AI agents learn from machine readable signals, adjust priorities accordingly.

Conclusion

Adopting an AI-ready technical SEO audit is no longer optional for law firms that seek consistent online visibility. AI-driven search changes indexing and citation behavior, and traditional SEO alone cannot guarantee discovery. Therefore firms must run unified audits that combine crawler access, rendering checks, structured data, readability, and discovery signals. This approach reduces blind spots and increases the likelihood of AI citations.

Start audits with server logs and structured data validation. However, prioritize pages that drive client conversions. A unified audit strategy prepares legal websites for ongoing search technology changes. Because AI systems learn from machine readable signals, legal content should be structured and consistent. Furthermore, server-side rendering and JSON-LD reduce the risk of missed content by static-reading crawlers. Thus, a systems-level audit closes gaps between human and machine indexing. Measure citation frequency and adjust content density accordingly.

Case Quota provides specialized legal marketing services built for these challenges. Visit Case Quota to learn more about high-level strategies used by Big Law firms. Their approach scales advanced tactics to help small and mid-sized firms achieve market dominance. Consequently, law firms that adopt AI-ready audits and partner with experienced agencies gain a sustainable visibility advantage. Partner with a technical SEO team for execution and monitoring. Act now. Begin today.

Frequently Asked Questions (FAQs)

What is an AI-ready technical SEO audit?

An AI-ready technical SEO audit is a systems-level review. It checks crawler access, JavaScript rendering, structured data, and AI reading signals. It therefore prepares sites for both traditional crawlers and AI agents.

Why must law firms prioritize this audit?

AI crawlers change indexing and citation behavior. Because bots now account for a large share of traffic, firms need machine readable content. Thus audits protect visibility and increase AI citation chances.

What practical steps should I run first?

Start with server logs and robots.txt. Next test rendering with curl and prerendering tools. Then add JSON-LD and validate schema markup. Finally audit the accessibility tree and canonical excerpts.

How often should firms run the audit?

Run a full audit quarterly. However, test critical pages after major releases. Also monitor server logs continuously for new crawler patterns.

What common challenges appear and how do you fix them?

Common issues include hidden content, broken structured data, and client side only rendering. Fix these by implementing SSR or SSG, correcting JSON-LD, and aligning visible text with markup.