AI-ready content architecture: a practical blueprint for law firm search in the AI era

AI-ready content architecture describes how law firms should structure public facts so that AI agents can find, validate, and surface them. Because search is shifting from links to facts, law firm SEO now demands machine-readable signals. This introduction explains why firms must move beyond traditional sitemaps and page-level optimization.

The core idea centers on creating deterministic inputs for large language models and retrieval systems. In practice, that means structured data like JSON-LD, clear entity relationship mapping, versioned content API endpoints, and provenance metadata on every public fact. Moreover, these layers reduce hallucination risk while improving visibility in AI-driven responses. As a result, firms that adopt this approach can expect more accurate AI Overviews and better attribution for legal content.

For law firms, the stakes differ from those in other industries. Clients expect precise, current legal guidance, and search signals that favor unverified summaries can create liability. Legal marketers should therefore prioritize machine-validated facts and a verified source pack. In practice, the minimum viable implementation includes a JSON-LD audit and upgrade, an authenticated content endpoint for FAQs and practice notes, and timestamps with authorship metadata on facts.

This article takes a technical and analytical approach. First, it maps the four-layer framework that yields clean, authoritative brand access for AI agents. Second, it outlines implementation priorities for midsize and enterprise firms. Finally, it previews standards like Model Context Protocol and how provenance metadata will change ranking signals. In short, AI-ready content architecture is not an abstract ideal. Instead, it is a prescriptive, measurable infrastructure law firms can begin building this quarter to future-proof search visibility.

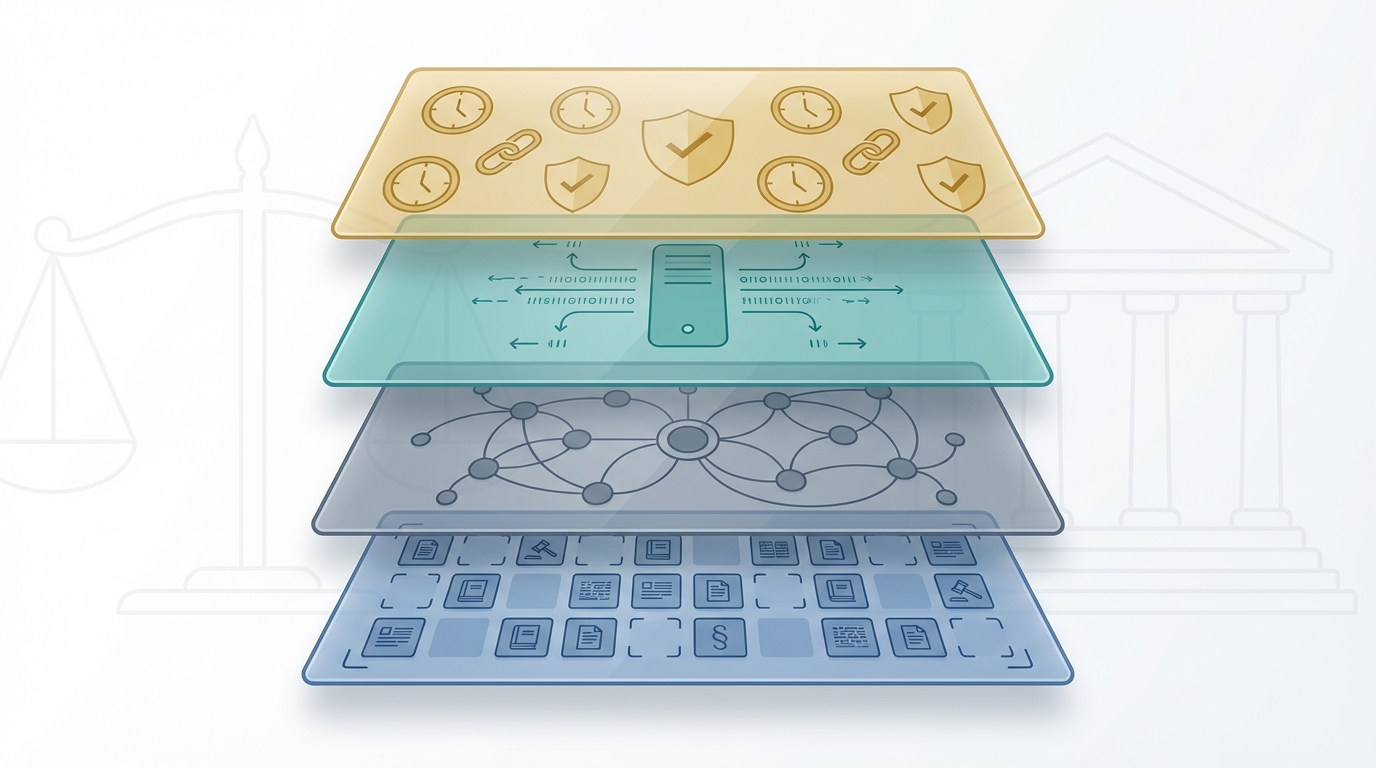

AI-ready content architecture: the four layers explained

This section breaks down the four-layer AI-ready content architecture. It explains what each layer does and gives prescriptive steps law firms can implement. The goal stays the same throughout: give AI agents clean, authoritative access to your brand. As one concise summary puts it, “The four-layer framework for giving AI agents clean, authoritative access to your brand.”

Layer one: structured data with JSON-LD and Schema.org

Start with structured data. Use JSON-LD to mark up Organization, Service, Product, and FAQPage schemas. This follows long-standing Schema.org practice, and Google recommends JSON-LD as its preferred structured data format. Implementing JSON-LD makes facts machine-readable, and pages with valid structured data are 2.3 times more likely to appear in Google AI Overviews. Run a JSON-LD audit first, then fix missing or malformed schemas. Use the @id graph pattern to interlink Organization, Product, Service, and FAQPage entities so that each node can reference the others by a stable identifier. This pattern helps vector retrieval and graph-based indexing.
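As a rough illustration of the @id graph pattern, the Python sketch below builds a small JSON-LD document in which a Service and an FAQPage point back to the Organization by its @id. The domain, firm name, URL fragments, and question text are placeholders invented for the example, not values from any real site, and the helper names are hypothetical.

```python
import json

BASE = "https://www.example-firm.com"  # hypothetical domain (assumption)


def build_graph(base: str) -> dict:
    """Return a JSON-LD document whose nodes reference each other by @id."""
    org_id = f"{base}/#organization"
    service_id = f"{base}/practice-areas/employment-law/#service"
    faq_id = f"{base}/faq/#faqpage"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                "@id": org_id,
                "name": "Example Firm LLP",  # placeholder name
                "url": base + "/",
            },
            {
                "@type": "Service",
                "@id": service_id,
                "name": "Employment Law",
                # Reference the Organization node instead of repeating it.
                "provider": {"@id": org_id},
            },
            {
                "@type": "FAQPage",
                "@id": faq_id,
                "publisher": {"@id": org_id},
                "mainEntity": [
                    {
                        "@type": "Question",
                        "name": "Do you offer free consultations?",
                        "acceptedAnswer": {
                            "@type": "Answer",
                            "text": "Placeholder answer text.",
                        },
                    }
                ],
            },
        ],
    }


def to_script_tag(doc: dict) -> str:
    """Serialize the document into the script tag that carries JSON-LD in HTML."""
    return '<script type="application/ld+json">' + json.dumps(doc, indent=2) + "</script>"
```

Because every node carries its own @id, a later page can refer to the same Organization with `{"@id": ...}` rather than redefining it, which is what keeps the graph consistent across templates.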

Layer two: entity relationship mapping and graph data

Next, map relationships between entities explicitly. Entity relationship mapping expresses how practice areas connect to attorneys, case types, and outcomes. Use a graph data model to show those links. This practice improves AI retrieval quality because models can follow entities instead of guessing. For law firms, model relationships for practice areas, jurisdictions, fee structures, and lead sources. Also map integrations such as partner firms or referral channels. Because retrieval systems favor clear structure, invest in a persistent entity graph. Use consistent identifiers and canonical URLs for each node.
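One way to picture a persistent entity graph is a small in-memory model keyed by canonical URLs. The sketch below is only an illustration under that assumption: the class names, the predicate label `handledBy`, and the example URLs are hypothetical, and a production graph would likely live in a database or in the JSON-LD itself.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    id: str    # canonical URL used as the persistent identifier
    type: str  # e.g. "Service", "Person", "Place"
    name: str


class EntityGraph:
    """Minimal directed graph: (subject id, predicate) -> set of object ids."""

    def __init__(self):
        self.nodes = {}
        self.edges = defaultdict(set)

    def add(self, entity: Entity) -> None:
        self.nodes[entity.id] = entity

    def relate(self, subject_id: str, predicate: str, object_id: str) -> None:
        # Refuse edges to unknown nodes so every link resolves to a real entity.
        for node_id in (subject_id, object_id):
            if node_id not in self.nodes:
                raise KeyError(f"unknown entity: {node_id}")
        self.edges[(subject_id, predicate)].add(object_id)

    def related(self, subject_id: str, predicate: str) -> list:
        """Return the names of entities linked from subject_id, sorted."""
        targets = self.edges.get((subject_id, predicate), ())
        return sorted(self.nodes[i].name for i in targets)
```

A usage example: add a practice-area node and an attorney node, call `relate(practice_area_id, "handledBy", attorney_id)`, and `related(practice_area_id, "handledBy")` returns the attorney's name. Rejecting edges to unknown IDs is the design choice that enforces consistent identifiers.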

Layer three: versioned content API endpoints

Then expose versioned content endpoints for FAQs, documentation, and practice notes. A stable API helps prevent stale answers. It also enables audit logging and access control. Publish endpoints with semantic routes, version tags, and ETag support. For example, provide /api/v1/faqs and /api/v1/practice-notes endpoints. Versioning matters because AI agents cache facts: with explicit versions, you can roll back incorrect statements and track updates. The Verified Source Pack becomes machine-validated when the content API exists.
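To make the ETag idea concrete, here is a framework-free Python sketch of a conditional GET handler for the /api/v1/faqs and /api/v1/practice-notes routes mentioned above. The payloads, the `handle_get` function, and the choice of a truncated SHA-256 digest as the ETag are assumptions for illustration. A real service would sit behind a web framework and would also need to parse If-None-Match headers that list several tags.

```python
import hashlib
import json

# Hypothetical payloads; a real endpoint would load these from the CMS.
ROUTES = {
    "/api/v1/faqs": [
        {"id": "faq-1", "question": "Do you offer free consultations?",
         "answer": "Placeholder answer text."},
    ],
    "/api/v1/practice-notes": [],
}


def render(payload) -> bytes:
    """Serialize deterministically so identical content yields identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def etag_for(body: bytes) -> str:
    """Derive a strong ETag (a quoted string) from a hash of the response body."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def handle_get(path: str, if_none_match: str = None):
    """Return (status, headers, body) for a GET request.

    Simplification: only a single ETag value in If-None-Match is handled.
    """
    if path not in ROUTES:
        return 404, {}, b""
    body = render(ROUTES[path])
    etag = etag_for(body)
    headers = {"ETag": etag, "Content-Type": "application/json"}
    if if_none_match == etag:
        # Client copy is current: send no body.
        return 304, headers, b""
    return 200, headers, body
```

An agent that stored the ETag from an earlier 200 response can send it back and receive a 304 until the underlying facts change, at which point the hash and therefore the ETag change too.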

Layer four: provenance metadata on every fact

Finally, add provenance metadata to each fact. Include timestamps, authorship, and source chains. Provenance reduces hallucination risk. It also enables traceability when AI systems synthesize answers. As one cautionary note explains, “a flat list with no provenance metadata is exactly the kind of input that produces confident-sounding but inaccurate outputs.” Therefore tag each exposed fact with who wrote it, when, and where it was published.
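A provenance record can be as simple as a fact plus who wrote it, when, and where it was published. The following Python sketch shows one possible shape. The field names (`author`, `published_at`, `source_url`, `source_chain`) and both helper functions are hypothetical choices for this example rather than an established schema.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Fact:
    statement: str
    author: str
    published_at: str          # ISO 8601 timestamp, normalized to UTC
    source_url: str            # where the fact is published
    source_chain: tuple = ()   # upstream sources, nearest first


def make_fact(statement, author, source_url, published_at: datetime, source_chain=()):
    """Build a Fact, rejecting naive timestamps that cannot be compared reliably."""
    if published_at.tzinfo is None:
        raise ValueError("published_at must be timezone-aware")
    stamp = published_at.astimezone(timezone.utc).isoformat()
    return Fact(statement, author, stamp, source_url, tuple(source_chain))


def missing_provenance(record: dict) -> list:
    """List the required provenance fields that are absent or empty."""
    required = ("author", "published_at", "source_url")
    return [key for key in required if not record.get(key)]
```

Running `missing_provenance` over every record before it is exposed gives a cheap gate: any fact that lacks an author, timestamp, or source URL can be held back instead of published as a flat, untraceable entry.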

Role of XML sitemaps and sitemap best practices

Do not abandon sitemaps. XML sitemaps remain useful for discovery. However, treat them as a discovery layer, not the authoritative fact source. Keep sitemaps lean. The sitemap protocol caps each file at 50,000 URLs, so aim to stay well under that limit when possible. Organize sitemaps by content type and update frequency. Avoid overcomplicating the sitemap architecture, because unnecessary complexity adds operational risk. In practice, combine clean XML sitemaps with JSON-LD graphs and the versioned content API.
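The segmentation advice above can be sketched with the Python standard library. This example writes one or more sitemap files per content type and splits any group that exceeds a configurable limit. The file naming scheme and the input shape (a dict of content type to `(loc, lastmod)` pairs) are assumptions made for the illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit in the sitemap protocol


def chunk(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_sitemap(entries) -> bytes:
    """Build one sitemap file from (loc, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def build_by_type(pages: dict, limit: int = MAX_URLS_PER_FILE) -> dict:
    """Return {filename: xml bytes}, one or more files per content type."""
    files = {}
    for content_type, entries in pages.items():
        for number, part in enumerate(chunk(entries, limit), start=1):
            # Hypothetical naming scheme: sitemap-<type>-<n>.xml
            files[f"sitemap-{content_type}-{number}.xml"] = build_sitemap(part)
    return files
```

Passing a much smaller `limit` than the protocol maximum is a simple way to keep each file lean, in line with the recommendation to stay well under the cap.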

Standards and next steps

Prepare for Model Context Protocol adoption. MCP provides a standardized way for AI systems to fetch external facts. As MCP gains traction, your four-layer work will become more interoperable. Start with the minimum viable implementation this quarter. Ship the JSON-LD audit, a structured content endpoint, and provenance metadata on public facts. This approach gives measurable gains now and future-proofs your search visibility.

| Sitemap size | Typical URL count | SEO indexing impact | AI visibility impact | Practical management | Recommended best practices | Notes and references |
|---|---|---|---|---|---|---|
| Small (less than 5k) | Up to 5,000 | Fast discovery and high crawl efficiency. Prioritizes high-value pages. | Moderate if no structured data. High when paired with JSON-LD and Schema.org. | Low overhead. Easy to audit and maintain manually. | Keep sitemap focused. Include canonical URLs. Pair with JSON-LD and @id graph links. | Aligns with advice to keep sitemaps lean and focused. Use @id graph pattern to interlink entities. |
| Medium (5k to 50k) | 5,000 to 50,000 | Balanced indexing. Supports broader coverage without heavyweight tooling. | Better when structured signals exist. Pages with valid structured data are 2.3x more likely to appear in Google AI Overviews. | Requires logging, routine audits, and validation tooling. | Segment by content type. Use changefreq and lastmod. Keep each sitemap well under 50k lines. | “Keeping a sitemap to well under 50k lines ensures better indexing.” Use sitemaps as discovery, not authoritative fact sources. |
| Large (over 50k) | 50,000+ or many files | Risk of crawl inefficiency. May require sitemap index and prioritized feeds. | Lower baseline visibility if the structure is noisy. However visibility improves when combined with a content API and provenance metadata. | High operational cost. Needs automation, CI checks, and monitoring. | Use sitemap indexes and prioritized feeds. Expose versioned content API endpoints and ETags. | Treat sitemaps as a discovery layer only. Add provenance metadata to facts and rely on structured content endpoints for authoritative answers. |

Platform choices and AI-ready content architecture: tools that change visibility

Platform choices shape how AI systems find and trust law firm content. Therefore pick systems that expose clean structured data and stable APIs. Because retrieval models rely on consistent signals, your stack must prioritize JSON-LD, content API endpoints, and clear provenance.

Start with JSON-LD and Schema.org markup. Implement Organization, Service, Product, and FAQPage schemas. Google favors JSON-LD, and Schema.org remains the shared vocabulary. For implementation guidance, see Schema.org and the Google Developers Structured Data Guide. When you standardize markup, AI retrieval can pull discrete facts instead of guessing.

Next, choose a platform with robust content API support. A CMS that only renders HTML adds friction for agents. Conversely, systems that offer versioned JSON endpoints make facts auditable. Prefer platforms that support /api/v1 routes with ETag headers and semantic URLs. As Duane Forrester notes, “The minimum viable implementation, one you can ship this quarter without betting the architecture on a standard that may shift, is three things.” Those three things are a JSON-LD audit, a structured content endpoint, and provenance metadata.

Consider the CDN and edge stack. Cloudflare provides fast, programmable edge layers that simplify exposing structured JSON safely. Moreover, edge functions can normalize JSON-LD and inject @id graphs at the CDN layer. As a result, you can centralize transformation logic and reduce CMS dependency.

Evaluate third-party CMS trade-offs carefully. Traditional platforms that deliver flexible APIs work well. However some proprietary stacks may lock your data and complicate provenance. For example, if your CMS does not provide stable canonical URLs or content versioning, downstream AI systems will struggle to attribute facts correctly. Therefore audit any vendor for API stability, canonical management, and JSON-LD support before choosing.

Mind vector index hygiene. When you feed content into vector stores, cleanse duplicate facts and normalize entity identifiers. Otherwise, noisy vectors produce poor retrievals. As a best practice, create a canonical entity ID scheme and enforce it across JSON-LD, the content API, and your vector pipeline. This step reduces hallucination risk and improves precision for AI Overviews.
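A cleaning pass of this kind might look like the Python sketch below, which runs before any embedding step. It maps entity labels to a canonical ID through an alias table and drops records that duplicate an earlier one after whitespace and case normalization. The alias table, record shape, and function names are hypothetical, and no particular vector store is assumed.

```python
import re
import unicodedata

# Hypothetical alias table: every known label maps to one canonical entity ID.
ALIASES = {
    "employment law": "https://www.example-firm.com/practice-areas/employment-law/#service",
    "labor & employment": "https://www.example-firm.com/practice-areas/employment-law/#service",
}


def normalize_text(text: str) -> str:
    """Unicode-normalize, collapse whitespace, and lowercase for comparison."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip().lower()


def clean_records(records, aliases):
    """Return (clean, unresolved).

    clean: de-duplicated records keyed by canonical entity ID.
    unresolved: records whose entity label has no canonical ID yet.
    """
    seen = set()
    clean, unresolved = [], []
    for record in records:
        entity_id = aliases.get(normalize_text(record["entity"]))
        if entity_id is None:
            unresolved.append(record)  # hold for review instead of indexing
            continue
        key = (entity_id, normalize_text(record["text"]))
        if key in seen:
            continue  # same fact about the same entity already kept
        seen.add(key)
        clean.append({"entity_id": entity_id, "text": record["text"].strip()})
    return clean, unresolved
```

Returning unresolved records separately, rather than silently dropping them, makes gaps in the canonical ID scheme visible. The same alias table can then feed the JSON-LD and content API layers so all three agree on identifiers.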

Prepare for Model Context Protocol adoption. MCP promises a standard way for models to fetch external data. For reference, see the Model Context Protocol project and its documentation. Because MCP has growing support, design your API so that simple adapters can sit on top of it. This approach lets agents request scoped facts directly and preserves provenance.

Finally, align operational processes with technical choices. Create CI checks that validate JSON-LD against Schema.org. Automate API contract tests and enforce ETag and Last-Modified headers on API responses. Also log changes to facts and authorship so you can prove provenance. With these controls in place, law firms can measure and defend gains in visibility and trust.
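As a starting point for such a CI check, the Python sketch below performs a structural lint on a JSON-LD @graph document: it confirms the Schema.org context, flags nodes without @type or @id, catches duplicate IDs, and reports references that point at no node in the graph. It is not a full Schema.org validator, and the function names and error messages are assumptions for this example. It also assumes @context is a plain string, which real JSON-LD does not require.

```python
def iter_refs(value):
    """Yield every @id reference, i.e. a dict whose only key is "@id"."""
    if isinstance(value, dict):
        if set(value) == {"@id"}:
            yield value["@id"]
        else:
            for child in value.values():
                yield from iter_refs(child)
    elif isinstance(value, list):
        for item in value:
            yield from iter_refs(item)


def check_graph(doc: dict) -> list:
    """Return a list of human-readable problems; an empty list means the lint passed."""
    errors = []
    if doc.get("@context") not in ("https://schema.org", "http://schema.org"):
        errors.append("missing or unexpected @context")
    nodes = doc.get("@graph", [])
    ids = set()
    for index, node in enumerate(nodes):
        if "@type" not in node:
            errors.append(f"node {index}: missing @type")
        node_id = node.get("@id")
        if not node_id:
            errors.append(f"node {index}: missing @id")
        elif node_id in ids:
            errors.append(f"node {index}: duplicate @id {node_id}")
        else:
            ids.add(node_id)
    for ref in iter_refs(nodes):
        if ref not in ids:
            errors.append(f"dangling reference: {ref}")
    return errors
```

In a pipeline, a non-empty result from `check_graph` would fail the build, so malformed or unlinked markup never reaches production.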

In summary, combine JSON-LD, versioned content APIs, careful CMS selection, and vector hygiene. Moreover, use edge tooling like Cloudflare and plan for MCP adapters. Doing so makes your AI-ready content architecture resilient and future-proof.

Conclusion

AI-ready content architecture is the operational foundation for law firm visibility in the AI era. It combines structured data, entity relationship mapping, versioned content APIs, and provenance metadata. Together these layers give retrieval models deterministic, auditable signals and reduce hallucination risk. Because clients demand precise legal guidance, incorrect AI summaries carry reputational and legal risk. In short, the architecture aligns legal accuracy with search visibility.

Start small and iterate. Begin with a JSON-LD audit, a structured content endpoint, and provenance tags. This minimum viable implementation delivers measurable gains quickly. Do not attempt all layers at once. Instead, sequence the work to deliver value fast, and use the architecture to guide prioritization.

Prioritize vector index hygiene and canonical entity IDs when building graph data, and normalize those identifiers across JSON-LD, the content API, and your vector pipeline. Enforce API versioning, ETag support, and CI checks for JSON-LD validity. Log authorship and timestamps for each exposed fact. These steps make your facts traceable and machine-validated, which improves AI Overviews and reduces hallucinations.

Choose platforms that expose machine-readable facts via stable APIs. Cloud, CDN, and edge functions can centralize transformation logic and normalization. Evaluate CMS vendors for API stability, canonical URL control, and provenance support. Prepare for Model Context Protocol adoption to remain interoperable with major providers. Because OpenAI, Google, and Microsoft have each announced support for MCP, build adapters now to protect future visibility.

Start this quarter and measure impact. Case Quota helps law firms adopt Big Law strategies and technical execution to dominate search. Visit Case Quota to explore services and case studies. Measure outcomes by appearances in AI Overviews, assisted conversions, and reduced support load. Set KPIs and track provenance-backed citations in AI responses. This approach yields defensible, scalable search visibility for legal brands.

Frequently Asked Questions (FAQs)

What is AI-ready content architecture and why does my law firm need it?

AI-ready content architecture is a four-layer approach that makes facts machine-readable. It uses JSON-LD, entity relationship mapping, versioned content APIs, and provenance metadata. Because AI retrieval systems prefer deterministic inputs, this architecture reduces hallucinations and improves visibility. For law firms, precise and current legal guidance demands these controls.

What is the minimum implementation and how quickly can we ship it?

Start with a JSON-LD audit, a structured content endpoint, and provenance metadata on public facts. Ship these three items this quarter to measure impact quickly. This minimum viable implementation is actionable and durable. As Duane Forrester advises, start small and iterate.

How should we use XML sitemaps alongside structured data?

Keep XML sitemaps lean and focused on discovery. Use sitemaps to point crawlers to high-value URLs, but treat JSON-LD graphs and content APIs as the authoritative fact sources. Combine both rather than relying on sitemaps alone.

Which platforms and tools should we prioritize?

Prioritize platforms that expose stable APIs and support JSON-LD. Use edge tooling to normalize structured data. In addition, maintain vector index hygiene and canonical entity IDs. This reduces noise in embeddings and improves retrieval precision.

How will standards like Model Context Protocol and provenance affect the future?

MCP aims to standardize how models fetch external facts. Consequently, design APIs so that simple MCP adapters can be added. Also publish timestamps and authorship so agents can verify claims. As a result, provenance and MCP adoption should make AI-driven answers more traceable and more likely to cite your firm.