Platform-driven SEO and AI visibility: Why platform choices and AI crawl strategy decide law firm rankings

Platform-driven SEO and AI visibility determine whether a law firm ranks in traditional search and appears in AI recommendations. Platform defaults and bot access rules shape that visibility quickly and often silently. This introduction outlines three areas: CMS and technical SEO audits, AI visibility and crawl strategy, and the contrast between digital PR and press releases. The approach is data-driven, analytical, and cautionary. Because legal practices face regulatory risk and intense competition, platform decisions matter more than ever.

Start with CMS and technical audits. Audit platform defaults in WordPress, Squarespace, Shopify, and similar systems, because defaults can silently harm SEO. For example, improper canonical tags, lazy image loading defaults, or multiple H1 tags can reduce indexability and rankings. An audit must therefore check canonical usage, meta robots, llms.txt defaults, structured data, and Core Web Vitals. We recommend documenting constraints and building workarounds when platforms block necessary changes.

Next, address AI visibility and crawl strategy. Configure robots.txt and llms.txt thoughtfully: GPTBot and ClaudeBot are documented as honoring robots.txt directives, while llms.txt is a newer convention for pointing models to curated content. Blocking bots without analysis can cut your presence in AI-driven answers, so choose allowlists and rate limits carefully. Also consider how AI citations favor editorial coverage, linked mentions, and canonical sources. Consequently, you must protect authored legal content and monitor AI citation patterns.

Finally, compare digital PR and press releases. BuzzStream's citation data shows that original editorial coverage accounts for far more AI news citations than syndicated press releases. However, many firms still invest in distribution services with low AI impact. For law firms, prioritize earned mentions, expert commentary, and linkable resources. These build signals that both classic search and AI recommenders value.

This article will follow with technical checklists, crawl configuration templates, and PR tactics grounded in current datasets. In addition readers will get practical remediation steps and risk controls. Read on to avoid platform traps and to secure AI visibility for your firm.

Platform-driven SEO and AI visibility: Audit CMS defaults and implement workarounds

Choosing a CMS sets guardrails for search performance and AI discovery. Therefore a rigorous audit of platform defaults is the first step. Law firms must treat CMS selection as a technical decision. Otherwise default behaviors can silently block indexation, degrade performance, and reduce AI citations.

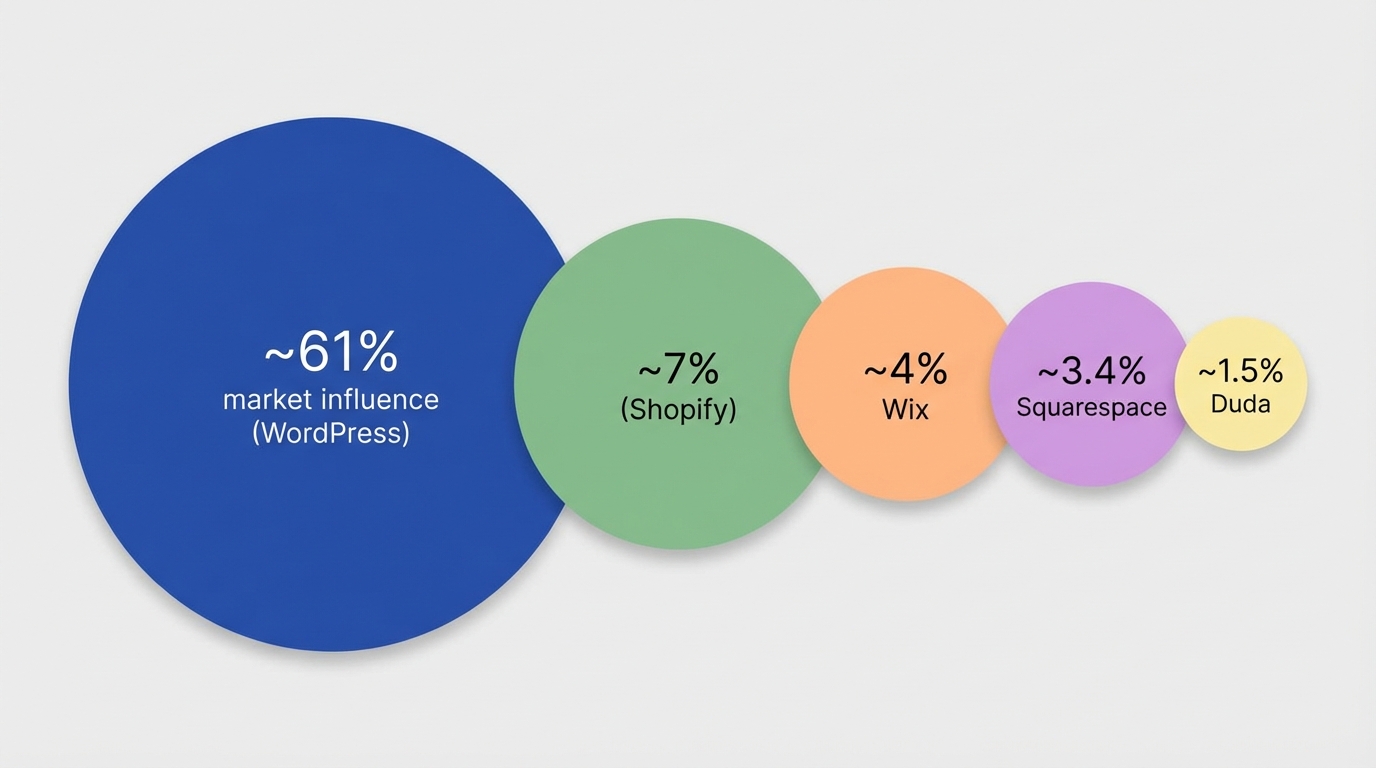

Start with the high-level market context. WordPress remains dominant, but platform dynamics have shifted markedly in recent years. W3Techs reports that WordPress holds roughly 60.7 percent of the CMS market among tracked sites. Yet Shopify and Wix have grown fast as firms pursue managed environments. For SEO teams this matters because platform architecture defines what you can and cannot change.

Key platform stats and comparisons

- WordPress: about 43.3 percent of all sites and 60.7 percent CMS share as of October 2025. Source: W3Techs

- Yoast SEO: widely installed across WordPress sites, with usage trends tracked publicly. Source: BuiltWith Trends

- Canonical tags: used on about 68 percent of desktop pages, up from 65 percent in 2024

- Meta robots: present on 47 percent of pages, up from 45.5 percent

- llms.txt adoption: valid on roughly 2.13 percent of sites; 39.6 percent of those files were autogenerated by All in One SEO

- Core Web Vitals pass rate: WordPress mobile 45 percent, Duda 85 percent, Wix 75 percent

Audit checklist

- Validate canonical tags and remove duplicate H1 patterns

- Check lazy loading and image loading defaults because 68 percent of images rely on browser defaults

- Confirm meta robots and follow directives to avoid accidental noindex or nofollow

- Inspect robots.txt and llms.txt configuration for AI crawlers and training bots

- Test Core Web Vitals and Lighthouse SEO scores across templates
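
Parts of this checklist can be automated per template. The following is a minimal sketch using only the Python standard library, not a full audit tool. The names `TemplateAudit` and `audit_html`, the sample markup, and the `example-lawfirm.test` domain are hypothetical, and the pass conditions (exactly one H1, exactly one canonical tag) are assumptions you should adjust to your own standards.

```python
from html.parser import HTMLParser


class TemplateAudit(HTMLParser):
    """Collects the on-page signals named in the audit checklist."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.canonicals = []
        self.meta_robots = []
        self.images_without_loading = 0

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        elif tag == "link" and "canonical" in (attr.get("rel") or "").lower().split():
            self.canonicals.append(attr.get("href"))
        elif tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.meta_robots.append((attr.get("content") or "").lower())
        elif tag == "img" and "loading" not in attr:
            # No explicit loading attribute: the browser default applies.
            self.images_without_loading += 1


def audit_html(html):
    """Return a list of human-readable findings for one rendered page."""
    parser = TemplateAudit()
    parser.feed(html)
    findings = []
    if parser.h1_count != 1:
        findings.append(f"expected 1 h1, found {parser.h1_count}")
    if len(parser.canonicals) != 1:
        findings.append(f"expected 1 canonical tag, found {len(parser.canonicals)}")
    if any("noindex" in c or "nofollow" in c for c in parser.meta_robots):
        findings.append("meta robots contains noindex or nofollow")
    if parser.images_without_loading:
        findings.append(
            f"{parser.images_without_loading} image(s) rely on the browser default loading behavior"
        )
    return findings


# Hypothetical template output used only to exercise the checks.
SAMPLE = """
<html><head>
<link rel="canonical" href="https://example-lawfirm.test/practice/immigration">
<meta name="robots" content="noindex, follow">
</head><body>
<h1>Immigration Law</h1>
<h1>Contact Us</h1>
<img src="/team.jpg">
<img src="/office.jpg" loading="lazy">
</body></html>
"""

findings = audit_html(SAMPLE)
for finding in findings:
    print(finding)
```

Run it against the rendered HTML of each template type (home, practice area, attorney bio, blog post) rather than a single page, since defaults often differ by template.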

Platform limitations and common traps

WordPress offers flexibility, but it also exposes risk. Plugin conflicts or default themes can inject duplicate tags. As Chris Green warned, if you want impact at scale you must nudge platform vendors and understand defaults. Wix and Shopify, by contrast, provide tighter control, but those managed environments impose structural SEO limits. For example, Shopify enforces URL prefixes and caps redirects at roughly 100,000. As a result, migrations or complex site architectures can hit platform ceilings.

Opportunities for law firms

Audit results reveal pragmatic workarounds. For firms with restrictive CMSs, host critical resources on a subdomain you control. Alternatively, implement edge rules or server-side rendering to fix performance gaps. Use Yoast or All in One SEO for canonical and llms.txt automation but verify outputs first; autogenerated files can misrepresent intent. Finally document constraints and remediation steps so technical SEO remains auditable.

Tools and references

- Run Lighthouse audits: Lighthouse

- Reference robots handling best practices: Google Developers

- Check Squarespace patterns with SEOSpace resources: SEOSpace

In short, platform defaults determine much of your organic and AI visibility. Therefore audit early, document limits, and apply surgical workarounds to avoid platform-driven SEO loss.

Platform-driven SEO and AI visibility: Configure crawl strategy for GPTBot and ClaudeBot

AI crawlers determine whether your content appears in model answers and recommendations. Therefore configuring robots.txt and related files is essential. Law firms must protect sensitive pages. At the same time, they must not accidentally cut off AI discovery.

Why crawl strategy matters

- Robots.txt validity improved to approximately 85 percent, which reduces accidental crawler errors. This improvement matters because valid rules reliably guide bots.

- llms.txt adoption remains low at about 2.13 percent of sites. Yet 39.6 percent of those files were autogenerated by All in One SEO. Autogenerated files can misrepresent intent.

- AI crawlers such as GPTBot and ClaudeBot respect robots directives in practice. Therefore incorrect disallows can remove you from AI indexes. See guidelines and crawler lists below.

Configure robots.txt with precision

Start by placing a clean robots.txt at your site root. Then test it with crawlers. Google documents robots.txt usage and the testing tools you should run during audits; see Google Robots Documentation. Its guide to useful rules shows how to apply allow and disallow lines correctly.

Consider bot-specific user agents

- Open and managed AI crawlers use explicit user agents. As a result, you can target them in robots files. For a comprehensive list of AI crawler user agents, consult Search Engine Journal.

- Anthropic documents ClaudeBot behavior and provides instructions to block or allow its crawler. See: ClaudeBot Documentation.

Protect sensitive legal content

Do not broadly disallow crawling; use targeted rules for specific areas instead. Chris Green warned that cutting bot crawling might severely impact your AI visibility. Review every noindex and disallow directive, and log crawler traffic to spot unexpected blocks.
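
To make targeted rules concrete, here is a hedged sketch that checks a candidate robots.txt with Python's standard `urllib.robotparser` before you deploy it. The policy itself, the `/client-portal/` and `/intake/` paths, and the `example-lawfirm.test` domain are hypothetical placeholders, and `check_access` is an illustrative helper, not part of any crawler's tooling.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: private areas are blocked for everyone, public
# content stays open to the two AI crawlers named in this article.
# Disallow lines come first because Python's parser applies the first
# matching rule within a group.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
Disallow: /client-portal/
Disallow: /intake/
Allow: /

User-agent: *
Disallow: /client-portal/
Disallow: /intake/
"""


def check_access(robots_txt, user_agent, urls):
    """Return {url: bool} showing whether user_agent may fetch each URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


URLS = [
    "https://example-lawfirm.test/guides/immigration-appeals",
    "https://example-lawfirm.test/client-portal/matter-1042",
]

results = {
    agent: check_access(ROBOTS_TXT, agent, URLS)
    for agent in ("GPTBot", "ClaudeBot")
}

for agent, result in results.items():
    for url, allowed in result.items():
        print(f"{agent:10} {'allow' if allowed else 'BLOCK'}  {url}")
```

A check like this only confirms what a standards-following parser would conclude from the file. Real crawler behavior still needs to be verified in your access logs.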

Leverage llms.txt and plugin outputs carefully

- Enable llms.txt only when you can control content selection. Yoast documents llms.txt features and automation. Review their spec before enabling: Yoast llms.txt Specification and Yoast Features.

- If plugins autogenerate llms.txt, audit the entries. Autogenerated lists often include recent posts but not your legal cornerstone pages. Therefore customize entries when accuracy matters.
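
The audit described above can be sketched as a simple coverage check. This assumes the file follows the Markdown link-list layout used in the public llms.txt proposal (`- [Title](URL)` entries under headings); the helper names, the sample file, and every URL are hypothetical.

```python
import re

# Matches Markdown links such as [Title](https://example.test/page).
LINK_PATTERN = re.compile(r"\[([^\]]+)\]\((https?://[^)\s]+)\)")


def listed_urls(llms_txt):
    """Return the set of URLs linked from an llms.txt body, without trailing slashes."""
    return {url.rstrip("/") for _title, url in LINK_PATTERN.findall(llms_txt)}


def missing_cornerstones(llms_txt, cornerstone_urls):
    """Return cornerstone URLs that the llms.txt file does not link to."""
    listed = listed_urls(llms_txt)
    return [url for url in cornerstone_urls if url.rstrip("/") not in listed]


# Hypothetical autogenerated file that lists only recent posts.
AUTOGENERATED = """\
# Example Law Firm

> Hypothetical firm used for illustration.

## Blog

- [Office holiday hours](https://example-lawfirm.test/blog/holiday-hours)
- [New associate announcement](https://example-lawfirm.test/blog/new-associate/)
"""

CORNERSTONES = [
    "https://example-lawfirm.test/guides/immigration-appeals",
    "https://example-lawfirm.test/blog/new-associate",
]

missing = missing_cornerstones(AUTOGENERATED, CORNERSTONES)
for url in missing:
    print("not listed in llms.txt:", url)
```

Keeping the cornerstone list in version control alongside the generated file makes it easy to rerun this check whenever the plugin regenerates its output.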

Technical tactics to improve AI presence

- Provide canonical, structured summaries for cornerstone pages. AI models favor canonical sources.

- Use structured data to label authorship, publication date, and legal disclaimers. This helps reliability signals.

- Allow retrieval bots to fetch resources that support answers, such as public guides, FAQs, and legal outlines.

- Monitor AI citations and query logs to detect dropped coverage. BuzzStream-style citation analysis can reveal trends.
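
As an illustration of the structured data tactic, the sketch below builds a JSON-LD snippet for a cornerstone page. `Article`, `Person`, `Organization`, `headline`, `datePublished`, and `mainEntityOfPage` are schema.org terms; the function name `article_json_ld` and all sample values are hypothetical. It covers authorship and publication date only. Disclaimers are left out because the right property for them depends on how your pages are modeled.

```python
import json


def article_json_ld(headline, author_name, firm_name, date_published, url):
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": firm_name},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical page details for illustration only.
snippet = article_json_ld(
    headline="How Immigration Appeals Work",
    author_name="Jane Example",
    firm_name="Example Law Firm",
    date_published="2025-10-01",
    url="https://example-lawfirm.test/guides/immigration-appeals",
)
print(snippet)
```

Validate the generated markup with a structured data testing tool before shipping it in a template, since a malformed block is simply ignored.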

Testing and monitoring

Test robots rules with validators and crawlers. Next, monitor access logs for AI crawler user-agent strings. Finally, update rules when platforms change crawler names or behavior. Done consistently, this protects both privacy and visibility.
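
Log monitoring can start small. The sketch below counts AI crawler requests by response status, assuming your server writes the common "combined" access log format. The sample lines, IP addresses, and user-agent strings are invented for illustration, and `summarize_ai_crawler_hits` is a hypothetical helper; check each vendor's documentation for the exact strings their crawlers send.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header (case-insensitive).
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot")

# Combined log format: host ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)


def summarize_ai_crawler_hits(lines):
    """Count requests per (crawler token, HTTP status) pair."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip lines in another format
        agent = match.group("agent").lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                counts[(token, match.group("status"))] += 1
    return counts


# Invented sample lines; real user-agent strings will differ.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Oct/2025:10:00:00 +0000] "GET /guides/immigration-appeals HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.9 - - [01/Oct/2025:10:00:05 +0000] "GET /faq HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Oct/2025:10:00:09 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

summary = summarize_ai_crawler_hits(SAMPLE_LOG)
for (token, status), hits in sorted(summary.items()):
    print(f"{token:10} status={status} hits={hits}")
```

A rising share of 403 or 429 responses for a crawler you intended to allow is a sign that a firewall, CDN rule, or plugin is blocking it regardless of what robots.txt says.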

In short, a precise crawl strategy protects legal content and supports AI discovery. Therefore balance blocking risky bots with enabling trusted crawlers. Do this and you preserve platform-driven SEO and AI visibility.

Platform-driven SEO and AI visibility: Compare digital PR and press releases

| Metric | Digital PR (Earned editorial coverage) | Press Releases (Syndicated distribution) | Key sources |
|---|---|---|---|
| AI search citation rate (share of news citations) | 81% of news citations in BuzzStream dataset came from original editorial content | Syndicated news made up 6.2% of news citations; syndicated press releases accounted for a small fraction of AI citations | BuzzStream Study |
| Share of full AI-citation dataset | Dominant share of AI-news citations; editorial content drives the bulk of model citations | Syndicated sources contributed around 0.9% of the full BuzzStream dataset; PRNewswire direct citations ~0.21% | BuzzStream Study |
| Impact on recommendation engines | High impact because AI systems prefer high authority, original reporting and linked context | Low impact; distributed releases are rarely cited by AI recommenders | BuzzStream Study and Digiday Analysis |
| Traditional syndication influence | Earned stories still feed syndication occasionally, but editorial pickup matters most | Syndication channels (e.g., Yahoo/MSN) showed limited AI citation: 0.32% of news citations and 0.04% of the full dataset | BuzzStream Study |
| Earned media vs syndicated media effectiveness | Most effective for building AI visibility, recommendation signals, and backlinks | Inefficient for AI visibility; useful for press metrics but poor ROI for AI citation goals | BuzzStream Study |

Notes

- Internal press releases and company newsroom content do appear in some AI citations: roughly 18% in the ChatGPT sample and about 3% on Google AI platforms. Even so, owned newsroom content has limited value compared with third-party editorial coverage. Source: BuzzStream analysis.

- Use earned outreach to secure high-authority placements, because editorial mentions improve both classic SEO and AI recommendation signals.

Conclusion: Secure Platform-driven SEO and AI visibility or cede rankings

Platform concentration creates real risk for law firm search strategies. WordPress, Shopify, and Wix dominate much of the CMS market, and default behaviors can limit what SEO teams change. As a result firms that ignore platform defaults expose core pages to indexation gaps, duplicate content, and performance penalties. Therefore audit platforms early and map constraints to remediation steps.

AI visibility depends on crawl signals and editorial recognition. Robots.txt rules determine whether GPTBot and ClaudeBot can access your material, and llms.txt can point models to the pages you most want read. Moreover, AI systems heavily favor original editorial reporting when citing news and topic experts. Consequently, earned media remains the primary route to AI citations and recommendation placements. Syndicated press releases rarely move the needle for AI-driven answers, so prioritize outreach that creates third-party mentions and links.

Small and mid-sized law firms can win against larger rivals by applying surgical technical SEO and focused PR. First, run CMS and template audits to confirm canonical, meta robots, and Core Web Vitals are correct. Second, apply precise robots rules that allow trusted retrieval bots while protecting privileged sections. Third, pursue earned editorial placements with a research and data-driven pitch. These tactics together improve classic organic rankings and AI recommendation chances.

Practically, prioritize actions that scale. Track llms.txt and robots changes in version control. Automate Lighthouse and CWV audits across environments. Also measure AI citation trends to validate PR outcomes. Over time these practices compound because AI recommenders and search engines prefer consistent, authoritative sources.

Case Quota partners with law firms to implement these Big Law strategies at scale. Visit Case Quota to learn how specialized legal marketing teams convert technical audits, crawl strategies, and earned outreach into measurable market share gains. For firms seeking dominance, leverage these insights and move faster than competitors.

In short, platform-driven SEO and AI visibility are not optional. Audit your CMS, protect AI crawl access, and invest in earned editorial coverage. Do this and your firm will control discovery across classic search and AI recommendation systems.

Frequently Asked Questions (FAQs)

Can my CMS choice hurt law firm SEO and AI visibility?

Yes. Platform defaults can block indexation or add duplicate tags. For example, some templates add multiple H1 tags or lazy-load images poorly. Therefore audit canonical tags, meta robots, and Core Web Vitals. Also document any platform constraints so you can build workarounds.

Should we block GPTBot or ClaudeBot in robots.txt to protect client data?

Not by default. Blocking bots broadly can remove you from AI indexes. Instead, use targeted rules to protect private pages. Moreover log crawler traffic and review user agents before changing rules. As a result you balance privacy and AI discovery.

What is llms.txt and should we enable it?

llms.txt is a proposed convention: a Markdown file at the site root that points language models to a curated list of your key pages. It is not an access-control file; robots.txt handles allow and disallow rules. Adoption remains low and some files are autogenerated, so only enable llms.txt if you can control its entries. Also audit plugin outputs from All in One SEO or Yoast before publishing.

Do press releases help AI citations compared with earned editorial coverage?

Syndicated press releases rarely drive AI citations. BuzzStream found editorial content accounts for the majority of AI news citations. Consequently prioritize earned coverage and third-party mentions. That tactic produces more recommendation signals and backlinks.

What practical steps should law firms take this quarter?

Run a CMS audit and fix canonical and meta issues. Then test and tighten robots.txt and llms.txt settings for retrieval bots. Finally launch targeted earned outreach to secure high-authority editorial mentions. Together these steps improve both classic SEO and AI visibility.